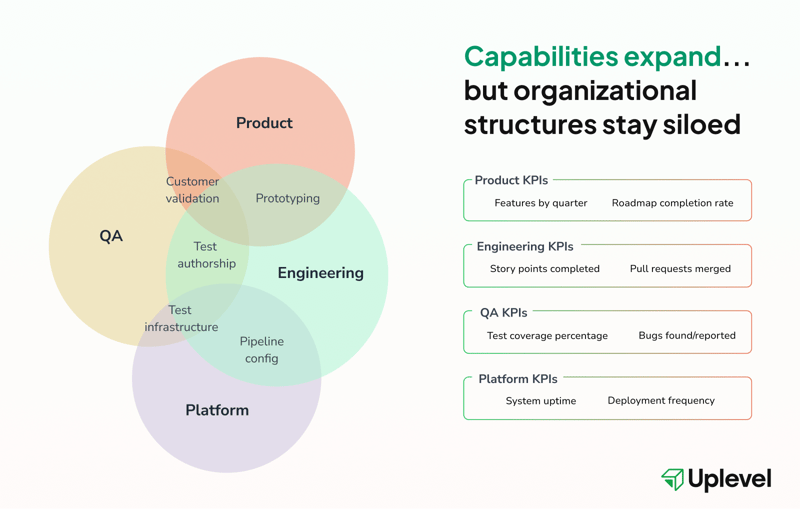

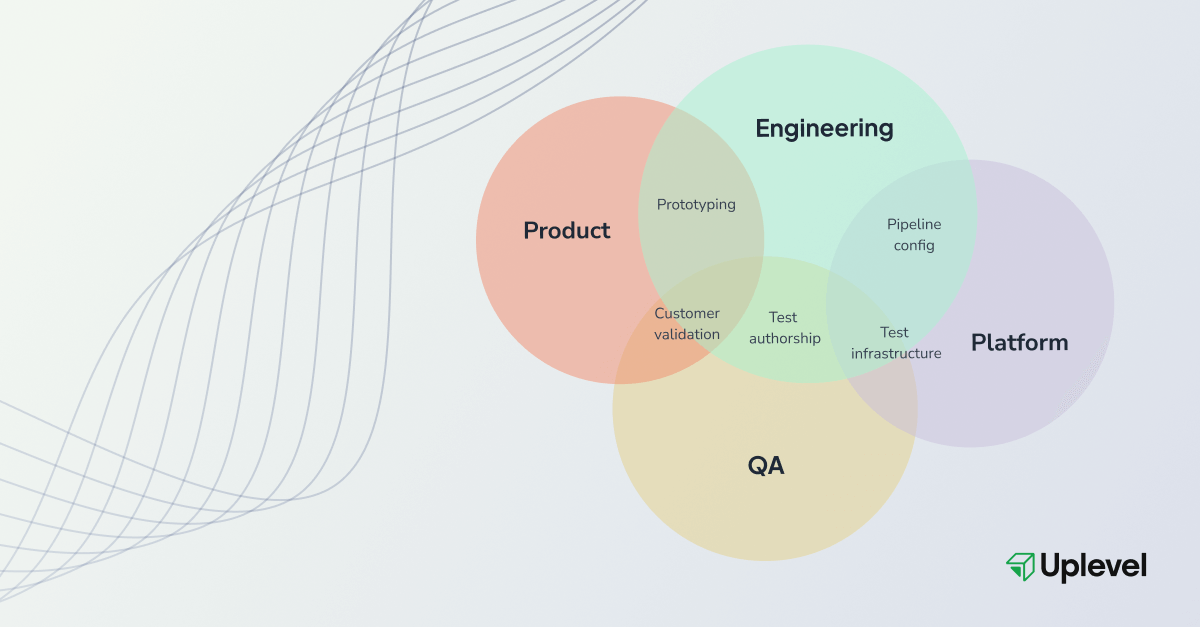

It’s 2026. Engineers can prototype features, write comprehensive test suites, and configure deployment pipelines. Product managers can validate technical assumptions without engineering cycles. QA work like test authorship requires less specialized knowledge now that AI takes on more of the load.

Who owns outcomes when AI blurs capabilities across the entire engineering org structure? Most organizations haven't answered this question because they're still measuring people for jobs that no longer exist in their original form.

Consider an engineer who uses AI to prototype a new checkout flow. She instruments it to track conversion rates, analyzes drop-off patterns, and iterates based on customer behavior. She has everything needed to make product decisions — except the authority.

So her prototype sits in limbo because nobody's job description covers "validate customer hypotheses with working code." And meanwhile, her KPIs are story points completed — because her job description says that the job of a software engineer is to ship code.

This scenario shows both the sheer potential of AI to transform engineering and the team structures that disincentivize that transformation.

Why Systems Can't Keep Up

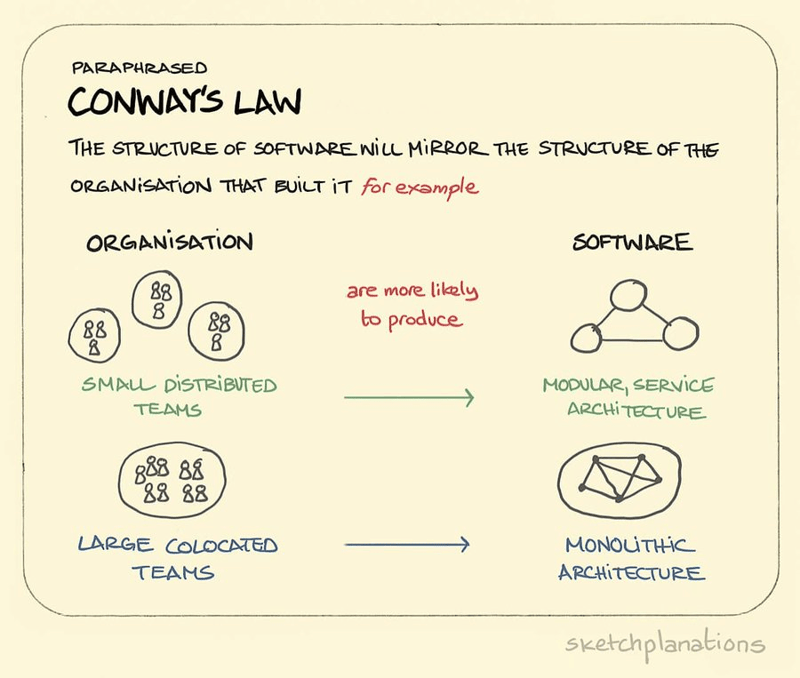

In 1967, Melvin Conway observed that organizations design systems that mirror their communication structures. Software architectures end up looking like the org charts (topologies) of the teams that built them, as Martin Fowler later clarified.

AI is expanding what teams can do, but they won’t reap those rewards as long as engineering team structures stay fixed. For example, product and engineering originally designed handoff processes for separated capabilities. And the entire notion of a “QA engineer” building testing frameworks assumes that engineers don’t have the capability to own quality.

The technology locked in around those assumptions. Now that AI is rapidly shifting capabilities into new territory, sociotechnical systems remain anchored to outdated models and block the very work they were designed to enable.

As a result, the 10x contributions that AI theoretically unlocks don't get done because it's unclear who owns them. Value leaks through the gaps between functional boundaries while everyone optimizes for metrics that no longer measure what matters.

Three Team-Structure Boundaries Under Pressure

Product and Engineering Work Now Overlaps

Engineers can instrument features to track adoption, analyze usage patterns, and draft requirements from behavioral data. Product managers can prototype ideas and validate technical feasibility without consuming engineering cycles.

Organizations where product and engineering share a high degree of ownership for customer outcomes achieve 60% higher returns to shareholders and 16% higher operating margins. The capability for shared ownership exists… but organizational structures rarely support it.

Take instrumentation. When engineers add tracking to measure feature adoption, they suddenly have access to data that traditionally lived with product teams. They can see which features get used, which get ignored, and which create friction. But if their performance reviews only measure pull requests merged, they have no incentive to act on customer insights. Product continues owning adoption metrics while engineers who could influence those metrics optimize for throughput.
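As a rough illustration of the kind of signal this tracking produces, here is a minimal sketch that turns raw usage events into per-feature adoption rates. The event shape, the feature names, and the `adoption_rates` helper are all hypothetical and chosen only for this example; a real analytics pipeline would look different.

```python
from collections import defaultdict


def adoption_rates(events, active_users):
    """Return the share of active users who used each feature at least once.

    `events` is an iterable of (user_id, feature_name) pairs, a hypothetical
    shape used only for this sketch.
    """
    users_by_feature = defaultdict(set)
    for user_id, feature in events:
        # Ignore events from users outside the active population.
        if user_id in active_users:
            users_by_feature[feature].add(user_id)

    total = len(active_users)
    if total == 0:
        return {}
    return {
        feature: len(users) / total
        for feature, users in users_by_feature.items()
    }


# Hypothetical usage events from a checkout flow.
events = [
    ("u1", "saved_cart"),
    ("u2", "saved_cart"),
    ("u1", "one_click_pay"),
    ("u1", "saved_cart"),  # repeat use by the same user counts once
]
active_users = {"u1", "u2", "u3", "u4"}

rates = adoption_rates(events, active_users)
for feature, rate in sorted(rates.items()):
    print(f"{feature}: {rate:.0%} of active users")
```

A number like this is an outcome signal. A count of merged pull requests says nothing about whether anyone used what shipped.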

"AI is not going to bring the value that it could because it's not being pointed at the problems that it needs to be pointed at. Everybody thinks it's somebody else's job."

The QA Separation Is Harder to Justify

Test authorship historically required specialized knowledge — testing frameworks, edge case identification, test suite maintenance. That difficulty justified separating QA from development.

AI collapses that justification. Engineers today can write comprehensive test suites as easily as application code. The person writing the code has the deepest context for testing it, making them the natural quality owner. In most cases, separating test authorship from feature development creates lag and context loss: Code gets written. Days pass. Someone who wasn't in the room when design decisions were made writes tests for it.

Many organizations still structure QA as downstream validation, creating split ownership. Engineers optimize for feature velocity because their metrics track deployment frequency, and QA optimizes for test coverage percentages that don't correlate with software quality. When quality issues surface in production, everyone points at someone else.

Platform Work Is Less Distinct

AI makes deployment configuration, observability tooling, and production instrumentation accessible to product engineers. Platform teams, however, built systems mirroring organizational structures from 5-10 years ago — with centralized control, restricted access, and approval workflows.

Engineers now have the capability to configure pipelines and add instrumentation. The systems block them. Permissions require tickets, production access needs approvals from teams who lack context, and engineers who could move faster hit scaffolding built for an earlier era. Features that could deploy in hours take days waiting for approvals, consuming most of the gains from an expensive AI investment.

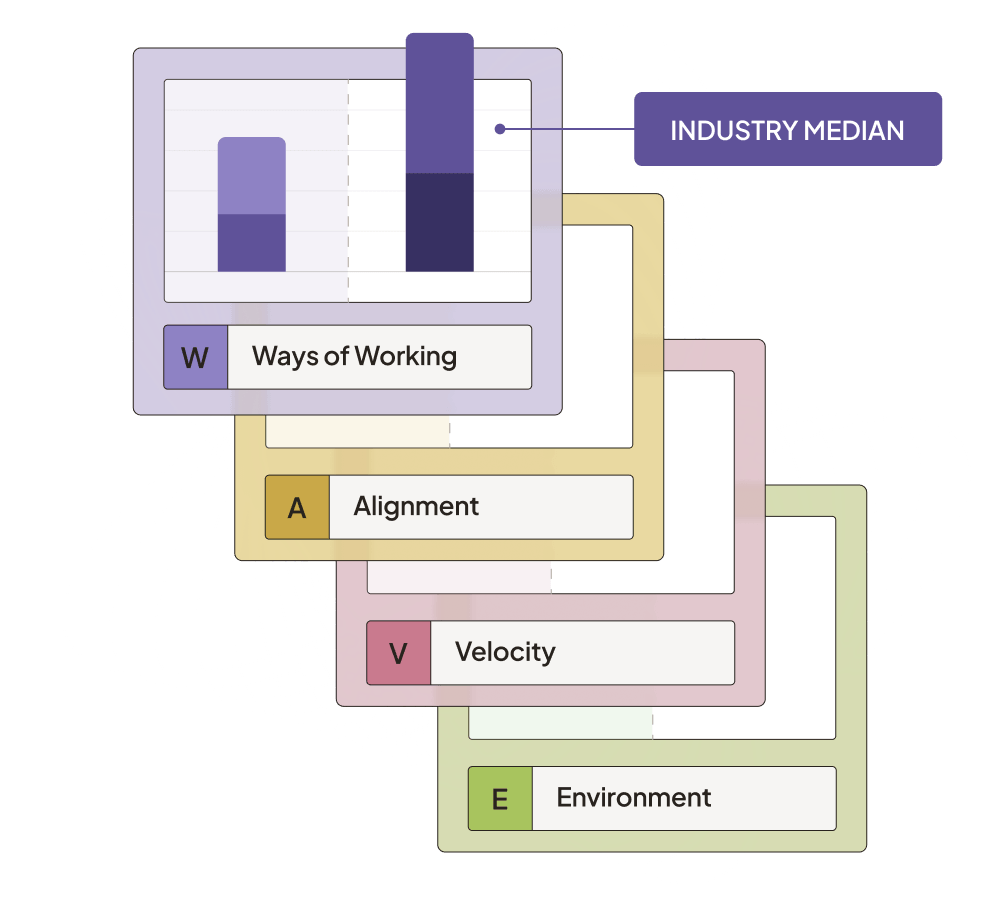

Rethinking Measurement

Story points and pull request counts measure effort rather than outcomes. AI makes this disconnect obvious. It’s not hard anymore to rack up story points on work that never ships, let alone on features no one uses.

Historically, the most meaningful signals of value (adoption rates, customer behavior patterns, feedback loop duration) flowed to product teams rather than engineering. Engineers, meanwhile, focused on maximizing output because their dashboards tracked deployment frequency.

But now that AI has blurred those boundaries, this split has engineers optimizing for output while the signals that show whether the output matters stay out of view.

What to Do

Map capabilities against ownership

List where engineers can work now that they couldn't a year ago. Prototyping? Test authorship? Pipeline configuration? Compare that against current role definitions and performance metrics. The delta tells you where value is leaking.
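One way to make that comparison concrete is to treat it as a set difference: what each role can now do, minus what its metrics actually cover. The sketch below assumes a simple mapping of roles to capability names. The roles, the capability labels, and the `ownership_gaps` helper are hypothetical placeholders, not a prescribed taxonomy.

```python
def ownership_gaps(capabilities, measured):
    """For each role, list capabilities people have that no metric covers."""
    gaps = {}
    for role, can_do in capabilities.items():
        # Anything a role can do but is not measured on is a potential leak.
        uncovered = can_do - measured.get(role, set())
        if uncovered:
            gaps[role] = sorted(uncovered)
    return gaps


# Hypothetical inventory: what each role can do today with AI assistance.
capabilities = {
    "engineer": {
        "shipping code",
        "prototyping",
        "test authorship",
        "pipeline configuration",
    },
    "product manager": {"roadmap", "prototyping"},
}

# Hypothetical inventory: what current role definitions and metrics cover.
measured = {
    "engineer": {"shipping code"},
    "product manager": {"roadmap"},
}

gaps = ownership_gaps(capabilities, measured)
for role, uncovered in gaps.items():
    print(f"{role}: {', '.join(uncovered)}")
```

Each entry in the result is a capability with no owner on paper, which makes it a candidate for the role and metric changes described next.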

Realign role expectations with expanded capabilities

Decide which expanded capabilities matter for your business priorities, and update what you measure accordingly — if you want engineers validating product assumptions, give them customer data access and put adoption metrics on their performance reviews.

Pair expanded roles with structured upskilling. An engineer configuring pipelines, for example, needs to understand CI/CD best practices and security implications. Create internal resources, establish mentorship from platform teams, and build review processes that transfer knowledge rather than just gate access.

Make customer data visible across functions

Usage patterns, adoption metrics, and feedback should inform engineering decisions alongside product roadmaps. Direct feedback loops between code and customer behavior drive better decisions about what to build next.

Restructure communication before restructuring teams

Your systems will keep mirroring your org structure until you change how teams actually work together. Engineers who own quality need direct lines to customers and support rather than QA intermediaries. Product and engineering sharing ownership need shared metrics and goals rather than separate scorecards.

Start with one team where boundaries have already blurred informally. Update their metrics and ownership structures to match actual work patterns. Learn what works before scaling.

"What organizations need to focus on is how to become an adaptive learning organization — one that senses where the needs are and is able to deploy resources to address them."

Adapt or Waste the Investment

AI has already expanded engineering capabilities into product territory, QA work, and platform management. The boundaries have blurred. Organizations extracting value are restructuring metrics, updating role expectations, and redesigning communication patterns to match what people can actually do.

Teams must change how they structure work around the technology. The mismatch between capabilities and ownership will widen until you address it directly, and until then you'll keep wondering why your AI investment delivers underwhelming results.
