Despite using various measurement frameworks, many engineering leaders fall into the trap of measuring what's easy rather than what's meaningful.

Most measurement approaches treat engineering as a purely technical practice. They assume you can optimize it through technical metrics alone. But engineering organizations are sociotechnical systems. Human collaboration, communication patterns, and environmental factors matter just as much as code deployment statistics.

Engineering organizations already have plenty of data. What they lack is a clear framework to interpret that data and drive meaningful change.

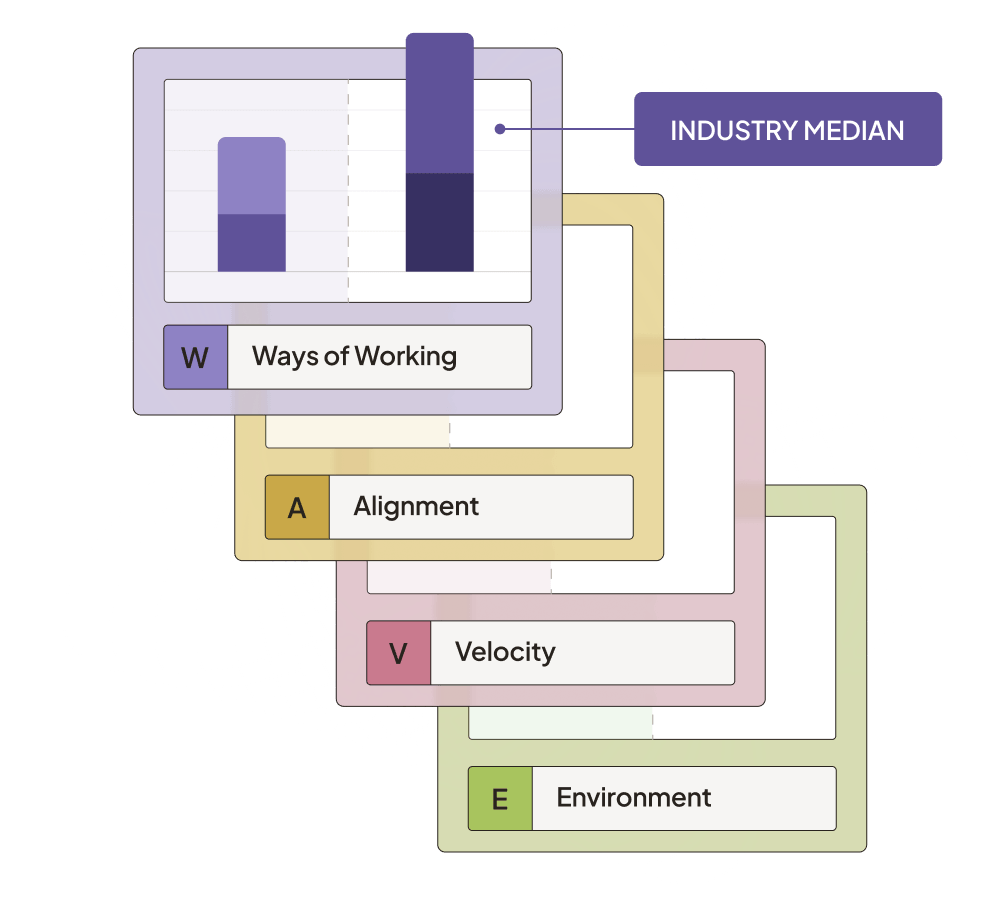

Uplevel's WAVE Framework can transform your engineering metrics from mere measurements into actionable insights that drive real improvement.

What’s wrong with traditional engineering KPIs?

Many organizations collect metrics without understanding what they're trying to achieve. Traditional KPIs often create an illusion of control. They fail engineering leaders in several ways:

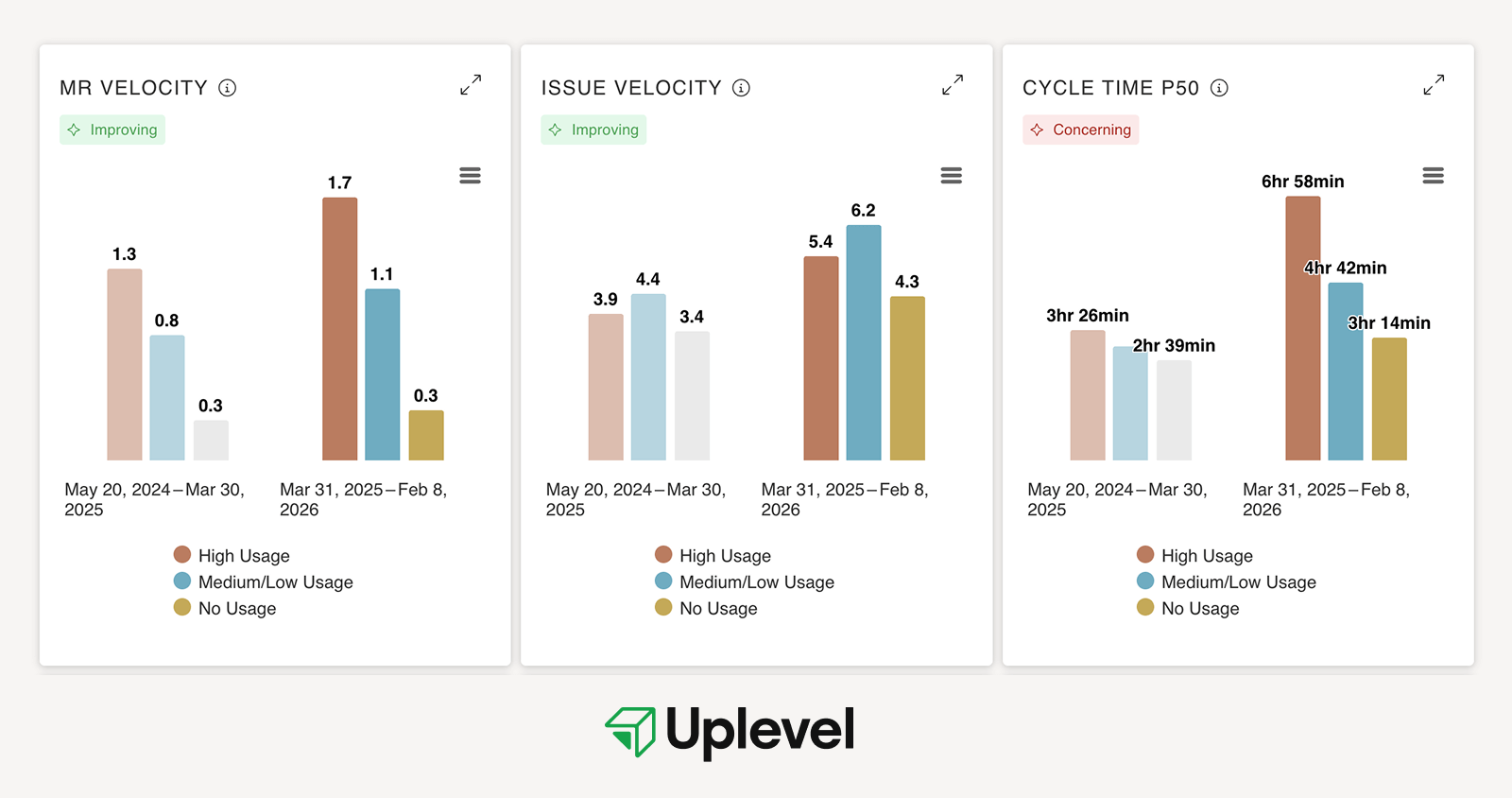

- Little correlation between metrics and business value: Engineering leaders often track metrics like PR counts, story points completed, or PR velocity. But these metrics don't reflect whether anything meaningful is actually shipping. In organizations with AI coding tools, this problem gets worse: high AI users show significant increases in merge request and issue velocity — exactly the numbers executives see as proof of ROI — while deployment frequency and value delivered to customers remain flat.

- Overreliance on lagging metrics: Frameworks like DORA give you valuable insights, but these are backward-looking measurements. For engineering leaders under pressure to improve future performance, understanding that deployment frequency was low last quarter offers limited guidance on what to change now.

- Limited ability to act on the data: Research by Dr. Nicole Forsgren (co-author of Accelerate) shows that without context about organizational structure, team interactions, and environmental factors, technical metrics alone aren't enough to diagnose performance differences across teams.

These limitations leave engineering leaders with plenty of data but insufficient guidance on what to change.

A holistic approach to engineering measurement and change

Unlike frameworks that focus narrowly on deployment statistics (DORA) or that provide theoretical models without clear measurement approaches (SPACE), WAVE addresses the full spectrum of factors that influence engineering effectiveness. Most importantly, it recognizes that engineering KPIs are interconnected. Improvements in one area cascade through the entire system, creating more capability.

WAVE is based on our data science findings and deep experience partnering with engineering leaders. Each category below offers a small group of dimensions and metrics that provide opportunities for actionable intervention. WAVE provides manageable clarity while still addressing the complexity of a sociotechnical system.

The WAVE Framework consists of four interconnected components:

- Ways of Working: Measures cultural elements that enable delivery

- Alignment: Captures how well engineering efforts connect to business value

- Velocity: Tracks the flow of work through the system

- Environment Efficiency: Evaluates system quality and friction

Each dimension of WAVE is summarized by a key engineering metric, which is a lagging indicator for that area. That metric is the outcome of leading indicators: the inputs that enable good engineering.

For example, improving engineering team health KPIs like psychological safety, meeting cadence, and mission alignment tends to leave developers less overwhelmed and with more time for deep work.

Instead of treating engineering KPIs in isolation, WAVE recognizes the connections between different aspects of engineering work. Besides coding, engineering consists of all your team's interactions with the product, users, and cross-functional teams.

The WAVE Framework creates a diagnostic map that helps engineering leaders understand the relationship between different dimensions of performance. This enables targeted improvements rather than isolated optimizations.

Ways of Working

Ways of Working metrics capture the cultural and behavioral factors that enable delivery. This dimension recognizes that engineering performance begins with people and team dynamics.

Deep work

Deep work metrics track the average number of daily uninterrupted hours developers can dedicate to focused coding time. Cal Newport's Deep Work shows the importance of uninterrupted focus for complex cognitive tasks like software development.

Research from the University of California, Irvine supports this: after an interruption, knowledge workers take an average of 23 minutes to return to their original task. For software engineers, context switching is particularly costly. Frequent interruptions lead to increased defect rates and longer completion times for complex programming tasks.
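One way to make the deep work metric concrete is to sum the uninterrupted gaps in a developer's calendar. The sketch below is a minimal illustration and not Uplevel's actual calculation: the function name `deep_work_hours`, the two-hour minimum block, and the use of meetings as the only interruption signal are all assumptions made for the example.

```python
from datetime import datetime, timedelta


def deep_work_hours(day_start, day_end, interruptions,
                    min_block=timedelta(hours=2)):
    """Sum the uninterrupted gaps of at least `min_block` in one workday.

    `interruptions` is a list of (start, end) datetimes, e.g. meetings.
    The two-hour default is an illustrative assumption, not a standard.
    """
    def clamp(moment):
        # Keep every timestamp inside the workday boundaries.
        return min(max(moment, day_start), day_end)

    total = timedelta()
    cursor = day_start  # end of the latest interruption seen so far
    for start, end in sorted(interruptions):
        start, end = clamp(start), clamp(end)
        gap = start - cursor
        if gap >= min_block:
            total += gap
        cursor = max(cursor, end)
    # Count the stretch between the last interruption and the end of day.
    if day_end - cursor >= min_block:
        total += day_end - cursor
    return total.total_seconds() / 3600


# Hypothetical calendar: three meetings in a 9:00 to 17:00 workday.
day = datetime(2024, 1, 8)
meetings = [
    (day.replace(hour=10), day.replace(hour=10, minute=30)),
    (day.replace(hour=13), day.replace(hour=14)),
    (day.replace(hour=14, minute=30), day.replace(hour=15)),
]
focus = deep_work_hours(day.replace(hour=9), day.replace(hour=17), meetings)
print(focus)  # 4.5
```

In this example only the 10:30 to 13:00 and 15:00 to 17:00 stretches count. The shorter gaps are too fragmented to qualify as deep work under the assumed threshold.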

Team health

Team health metrics provide a consolidated view of engineering teams' psychological safety, collaboration effectiveness, and overall engagement. This approach is grounded in Google's Project Aristotle research, which identified psychological safety as the most important factor in team effectiveness.

In software engineering specifically, a 2024 study in Empirical Software Engineering found that teams with established psychological safety were more invested in software quality. The study showed these teams demonstrate "collective problem-solving, pooling their collective intellectual efforts and experience to tackle quality-related challenges."

When you track team health over time, you can identify early warning signs of burnout, disengagement, or collaboration challenges before they impact delivery.

AI maturity

AI maturity captures organizational capability for AI success: clear SOPs, coherent toolsets, compliance posture, and leadership direction. It's one of the most revealing dimensions in WAVE right now, because it surfaces a gap most organizations don't know they have.

Uplevel's AI Measurement Crisis survey of over 100 engineering leaders found that 88% describe themselves as "AI ready," but only 2% have the measurement capabilities to back that claim up. The gap typically isn't tool access. It's clarity: what are we using AI for, which use cases show ROI, and how do we know?

Organizations with high AI maturity answer those questions systematically. They've established SOPs for tool usage, defined best practices by role and use case, ensured compliance and security, and aligned leadership on AI strategy. Developers spend time generating value, not deciding which tool to use or whether using it is sanctioned. Teams with lower maturity experience the inverse: organizational drag, inconsistent adoption, and AI activity that looks productive without connecting to delivery outcomes.

Improving the leading indicators of AI maturity (guideline clarity, toolset coherence, and leadership alignment) directly reduces this drag and creates the conditions for AI investment to compound rather than plateau.

More on Ways of Working

How Ways of Working Underpin Engineering Success

Ways of working determine engineering delivery outcomes more than tools and talent. Four coordination patterns that amplify organizational capability.

Deep Work Is a Multiplier for Engineering Organizations

Deep work time is critical for software engineering teams. Here's how leaders can measure it to help their teams deliver on high-value projects.

Alignment

Alignment is how leaders determine whether engineering capacity and effort translate into meaningful business outcomes. This dimension exposes the gap between what teams build and what drives actual value creation.

Allocation of effort

Resource allocation metrics track the actual distribution of engineering effort across new value creation, technical debt, and maintenance work. Unlike self-reported time allocations, data-driven measurements provide an objective view of where engineering time is actually spent.

In most organizations, developers believe they spend more time on new features than they actually do when their work is objectively analyzed. Our own research puts the average time spent on new value creation at just under 20%. That's one day out of five.
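As a rough illustration of how an allocation breakdown can be derived from work data rather than self-reports, the sketch below totals effort per category and converts it to shares. The category names, the hours-based weighting, and the function name `allocation_shares` are assumptions for the example. The article does not specify how Uplevel classifies or weights work.

```python
from collections import Counter


def allocation_shares(work_items):
    """Return each category's share of total effort as a fraction of 1."""
    totals = Counter()
    for category, hours in work_items:
        totals[category] += hours
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {name: hours / grand_total for name, hours in totals.items()}


# Hypothetical week of effort for one team, in hours.
week = [
    ("new_value", 8),
    ("tech_debt", 12),
    ("maintenance", 14),
    ("maintenance", 6),
]
shares = allocation_shares(week)
print(round(shares["new_value"], 2))  # 0.2
```

Here 8 of 40 hours go to new value creation, which is the "one day out of five" pattern described above.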

Planning effectiveness

Planning effectiveness reflects how well teams understand their work, capacity, and alignment with evolving priorities. It measures requirements churn, clarity of prioritization, connection to business value, epic lead time, and plan phase duration.

When teams consistently deliver what they commit to, it suggests a healthy balance between ambition and realism. Stable requirements indicate clarity in what needs to be built. This minimizes churn and rework that delay value delivery.

As always, however, context matters. These metrics should not be treated as success criteria on their own. A high sprint completion rate, for instance, could mask underlying issues if teams are playing it safe by undercommitting. It could also hide problems if they're delivering work that's no longer relevant due to shifting priorities.

Instead, planning effectiveness is a signal to detect misalignments in team capacity, requirement clarity, or cross-functional communication. When planning metrics fluctuate significantly, it may indicate that teams lack the information or autonomy needed to make reliable commitments. This can delay or derail the delivery of customer value.

User alignment

Uplevel's user feedback cycle score measures how quickly teams receive and incorporate user feedback after releasing features. It tracks frequency and type of user engagement, user feedback cycle time, and customer satisfaction scores.

Short user feedback cycles are a leading indicator of engineering alignment to value. They create a continuous loop of validation between what is being built and what users actually need. When feedback is rapid and frequent, teams can confirm whether their work delivers meaningful outcomes. This enables faster course corrections.

We find this is one of the most underrated metrics. If your team doesn't get feedback or gets it too late, information is probably getting locked between departments.

More on alignment

Leveraging Customer Problems for Better Engineering Outcomes

Learn how Jason Yip keeps his teams focused on engineering outcomes by being problem obsessed.

Story Point Estimation Doesn't Work

Story point estimation falls short in estimating developer time. Learn how modern software development teams are moving beyond Agile for planning.

Velocity

Velocity measures how efficiently work moves through your engineering system. True velocity assessment requires understanding both throughput rates and the friction points that create delays and coordination overhead in your development process.

Velocity score

Uplevel's velocity score combines PR cycle time, PR velocity, issue velocity, and deployment frequency (where available) into a comprehensive throughput measurement. This consolidated view reveals whether teams can consistently deliver completed work rather than just generate activity.

When evaluating velocity metrics, avoid comparing teams against each other. Teams operate under different contexts — varying codebases, workflows, review cultures, and priorities make cross-team comparisons misleading. Instead, compare each team's current performance against its own historical baseline to identify genuine improvement opportunities.
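Comparing a team against its own baseline can be sketched in a few lines. This is an illustrative approach and not Uplevel's velocity score: the function name `change_vs_baseline`, the choice of mean and sample standard deviation, and the sample numbers are all assumptions.

```python
from statistics import mean, stdev


def change_vs_baseline(history, current):
    """Compare `current` with the team's own past values.

    Returns (relative_change, z_score). `history` needs at least two
    values because the sample standard deviation is undefined otherwise.
    """
    baseline = mean(history)
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    spread = stdev(history)
    relative_change = (current - baseline) / baseline
    # A z-score puts the change in the context of the team's usual variation.
    z_score = (current - baseline) / spread if spread else 0.0
    return relative_change, z_score


# Hypothetical median PR cycle times in hours for the last four sprints.
past_cycle_times = [40, 44, 36, 40]
relative, z = change_vs_baseline(past_cycle_times, current=50)
print(f"{relative:+.0%}")  # +25%
```

The z-score helps separate a real shift from normal sprint-to-sprint noise. A 25% increase means something different for a team whose cycle time barely moves than for one that swings widely.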

One additional complication worth tracking: in organizations with AI coding tools, PR complexity often increases significantly — in some cases doubling for high AI users — which extends review cycles even as raw coding speed improves. Velocity metrics interpreted without PR complexity data can misread this as a review problem when it's actually a batching and integration problem.

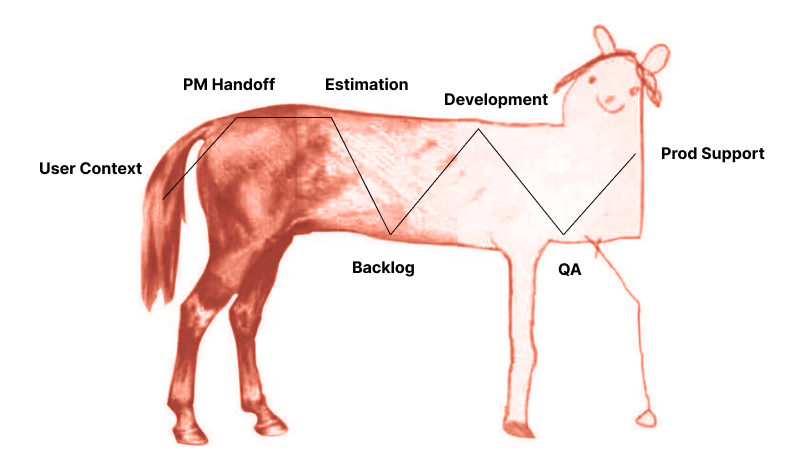

Handoffs

Handoff metrics evaluate both frequency and quality of work transitions between teams, individuals, or process stages. Each handoff introduces coordination overhead and potential communication gaps that slow delivery and increase error rates.

Research shows that minimizing handoffs through cross-functional teams can result in significant improvements. One McKinsey study documented a 45% decrease in code defects and 20% faster time to market after switching to cross-functional teams that reduced coordination dependencies.

Most organizations underestimate handoff costs because the delays appear as waiting time rather than active work. This makes them invisible in traditional productivity measurements.

Pull request reviews

Pull request review metrics examine PR complexity, PR quality, and review quality/time to assess both work structure and collaborative effectiveness. This measurement reveals whether teams create reviewable code changes and conduct meaningful peer evaluation.

PR complexity tracks oversized changes that create review bottlenecks. Large PRs are harder to review thoroughly. This increases defect rates and cycle times. PR quality measures whether changes include proper descriptions, link to tracking systems, and maintain reasonable cycle times.

Review quality evaluates whether the collaborative process catches meaningful problems. Effective reviews identify functional defects and architectural issues when remediation costs are lowest. They don't just catch style preferences that automated tools can address.

Teams with high PR quality and effective review processes ship faster with fewer production issues. Those with poor practices accumulate technical debt and spend more time fixing downstream problems.
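The PR complexity and PR quality checks described above can be approximated with simple rules. The sketch below is hypothetical: the `PullRequest` fields, the `review_flags` function, and the thresholds of 400 changed lines and 10 files are assumptions for illustration, not values from Uplevel or any standard.

```python
from dataclasses import dataclass


@dataclass
class PullRequest:
    number: int
    lines_changed: int
    files_touched: int
    has_description: bool
    linked_issue: bool


def review_flags(pr, max_lines=400, max_files=10):
    """Return the reasons a PR may be hard to review well."""
    flags = []
    # Size thresholds are illustrative; tune them to each team's baseline.
    if pr.lines_changed > max_lines or pr.files_touched > max_files:
        flags.append("oversized")
    if not pr.has_description:
        flags.append("missing description")
    if not pr.linked_issue:
        flags.append("no linked issue")
    return flags


pr = PullRequest(number=101, lines_changed=950, files_touched=4,
                 has_description=True, linked_issue=False)
print(review_flags(pr))  # ['oversized', 'no linked issue']
```

Tracking how often these flags appear over time, rather than policing individual PRs, keeps the focus on work structure instead of individual output.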

More on velocity

There's a Better Way to Measure Developer Velocity

When developer velocity becomes the goal, Goodhart’s Law takes effect. Learn why high-performing teams focus on holistic measures.

How to Reduce Cycle Time

Learn how to reduce cycle time and allow your teams to quickly address bugs, ship faster, and avoid work piling up.

Warm Engineering Handoffs Won't Fix Your Delivery Problems

Why warm engineering handoffs won't solve your delivery issues. Explore the root causes and solutions for optimizing your software development process.

Environment Efficiency

Environment efficiency measures how well your engineering system supports productive work and quality outcomes. These metrics help identify structural blockers to effectiveness that exist beyond individual teams.

Recovery

Recovery metrics combine lead time for changes, change failure rate, and mean time to repair (MTTR) — three of the four DORA metrics — to assess system resilience. These measurements reveal how quickly teams can deploy fixes and maintain stability under operational pressure.

Organizations with faster recovery capabilities demonstrate robust testing, monitoring, and deployment automation that enable rapid issue detection and resolution. The 2023 DORA report specifically highlighted that elite performers excel not just in deployment metrics but also in building cultures that support sustainable delivery.
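Two of the recovery inputs, change failure rate and MTTR, reduce to simple arithmetic once deployments and incidents are recorded. The sketch below assumes a hypothetical data shape (a `failed` flag per deployment and detected/resolved timestamps per incident) and is not tied to any particular tool. Lead time for changes is omitted for brevity.

```python
from datetime import datetime


def change_failure_rate(deployments):
    """Fraction of deployments flagged as having caused a production failure."""
    if not deployments:
        return 0.0
    failures = sum(1 for deployment in deployments if deployment["failed"])
    return failures / len(deployments)


def mean_time_to_repair_hours(incidents):
    """Average hours between detection and resolution of each incident."""
    if not incidents:
        return 0.0
    hours = [
        (resolved - detected).total_seconds() / 3600
        for detected, resolved in incidents
    ]
    return sum(hours) / len(hours)


# Hypothetical data: 10 deployments, 2 of which caused a failure.
deployments = [{"failed": index in (3, 7)} for index in range(10)]
incidents = [
    (datetime(2024, 3, 4, 9, 0), datetime(2024, 3, 4, 10, 0)),     # 1 hour
    (datetime(2024, 3, 11, 14, 0), datetime(2024, 3, 11, 17, 0)),  # 3 hours
]
print(change_failure_rate(deployments))      # 0.2
print(mean_time_to_repair_hours(incidents))  # 2.0
```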

Code quality

Code quality consolidates bug rates, customer-found defects, cyclomatic complexity, and support escalations into an integrated quality assessment. This recognizes that quality issues compound: high complexity increases bugs, which in turn drive support escalations and customer-found defects.

Detecting defects earlier in the development process reduces the cost of remediation by orders of magnitude. Defects found in production can cost 100x more to fix than those found during code review. This makes upstream quality investments essential for sustainable delivery.

Friction and flow

Friction measures systemic obstacles through architecture complexity, tooling effectiveness, deployment processes, and flow optimization. These factors determine organizational drag that slows delivery regardless of team capabilities.

In knowledge work, including software development, items typically spend 70-85% of the time waiting rather than being actively worked on. This implies a flow efficiency of only about 15-30%. Most organizations have tolerated this because the waiting appeared as white space rather than visible inefficiency.
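Flow efficiency is commonly defined as active time divided by total elapsed time (active plus waiting). A minimal sketch of that calculation follows, with a hypothetical function name and sample numbers chosen to match the 85% waiting case.

```python
def flow_efficiency(active_hours, waiting_hours):
    """Share of elapsed time in which the item was actively worked on."""
    total = active_hours + waiting_hours
    if total <= 0:
        raise ValueError("total elapsed time must be positive")
    return active_hours / total


# Hypothetical work item: 6 hours of active work spread over a 40-hour week.
efficiency = flow_efficiency(active_hours=6, waiting_hours=34)
print(f"{efficiency:.0%}")  # 15%
```

An item that waits 34 of its 40 elapsed hours spends 85% of its life idle, so even a large speedup in the 6 active hours barely changes the total delivery time.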

AI coding tools are changing that. When code generation accelerates but deployment frequency stays flat, the gap between activity and outcomes becomes harder to ignore. Organizations that assumed they had continuous delivery because they had the tooling are discovering that the practices, empowerment, and workflow changes required for real flow never happened. AI makes this visible. The question is whether leaders use that signal to fix the system or dismiss it as a pipeline problem.

More on Environment Efficiency

The CI/CD Gap Holding Back Your AI Investment

AI CI/CD bottlenecks prevent most orgs from capturing efficiency gains. Learn why deployment stays flat even as velocity doubles — and what to fix first.

Beyond Flow Metrics: A Holistic Approach to Software Value Delivery

Flow metrics and DORA look at software delivery through two very different lenses. Use both to optimize processes, identify bottlenecks, and drive value.

How WAVE helps engineering orgs build capability

Implementing the WAVE framework doesn't stop at collecting better metrics. The real change lies in how engineering organizations understand and improve. Sustainable transformation requires both data and capability building.

“Having data helps the conversations I have with teams. 'You didn't work on these goals this quarter. Why was that? What can we do to increase the time you're delivering value?' Then we can take action. So that's a lot of the work we're doing with Uplevel.”

Francisco Trindade, VP Engineering at Braze

As you consider your own organization's effectiveness, ask yourself: Do you have visibility into all four WAVE dimensions? Can you identify which dimensions currently limit your performance? And most importantly, do you have a methodology to turn those insights into sustainable improvement?

As engineering systems grow more complex, the organizations that succeed recognize effectiveness as an ongoing practice. It requires attention to the technical, social, and environmental realities of how teams work. When engineering leaders shift from isolated metrics to the integrated WAVE approach, they transform measurement from a reporting exercise into a powerful catalyst for sustainable improvement.
